Solving recruiter overwhelm and talent invisibility by transforming how job applications are screened and qualified.

Role

Lead Designer

Scope

End-to-end: Concept to Delivery

Launch

Pilot → 100% rollout in 3 months

Company

HeyJobs

Overview

tl;dr

HeyAssessment is an AI-powered conversational chat that enriches job applications after submission. It asks job-specific follow-up questions, adapting to each role, skipping what's already known, and probing deeper on open-ended answers, to turn sparse applications into structured profiles. Because the launch was under extreme time pressure, we shipped without formal user testing to capture immediate business impact, then iterated after launch.

Context

Why Assessment?

HeyAssessment was designed to improve hiring success by ensuring that candidates who are qualified, interested, and reachable are accurately presented to recruiters. We couldn't change a candidate's qualifications, so our primary goal was to make sure they were captured effectively, structured, and supplemented with job-specific information, reducing the screening effort for recruiters.

For Recruiters

For Talent

As Principal Designer, I led the design of the HeyAssessment chat interface, owning the full design process from concept through delivery.

PROBLEM

A Two-Sided Challenge

Recruiters faced overwhelming numbers of unqualified applicants and struggled to identify and reach quality candidates because of missing contact details, incomplete profiles, and skill mismatches. Candidates, in turn, reported slow processes, a lack of communication, and frequent ghosting.

The opportunity: collect richer, more useful information right after someone applies, specific to the job they applied for, in a way that feels like a natural next step, not another form.

Users

HeyAssessment had to work for two very different audiences.

Strategic challenge

Increased hiring volume

Reduced cost-per-hire

Recruiter efficiency

For the candidate experience, the focus was on user-friendly design: a streamlined, frictionless path to completion.

Process

Tone of Voice & Branding

I explored multiple brand directions and narrowed them down to three finalists. Route 1, "AI Recruiter", leaned professional and functional; Route 2, "HeyJobs Assistant", felt effortless and friendly; Route 3, "HeyJobber", was engaging and playful. Route 2 was recommended to stakeholders because it struck the right balance between approachability and trust and aligned with HeyJobs' existing brand voice, which had already been validated with users.

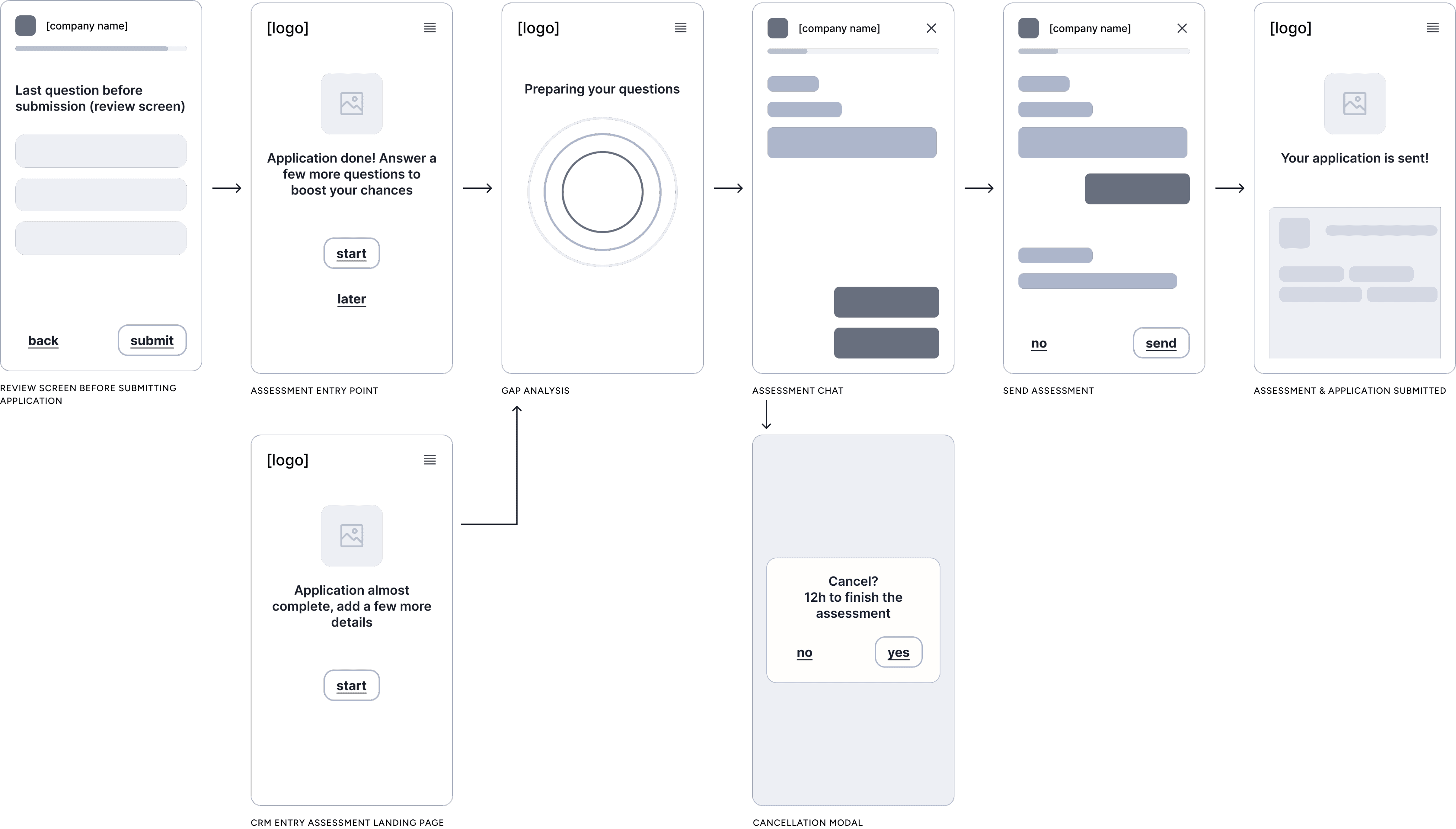

Wireframes

Wireframes were primarily used to align with stakeholders on the core flow and key touchpoints, setting shared expectations before moving into detail. Multiple layout approaches were explored and discarded along the way as the interaction model took shape.

Interaction design & UI exploration

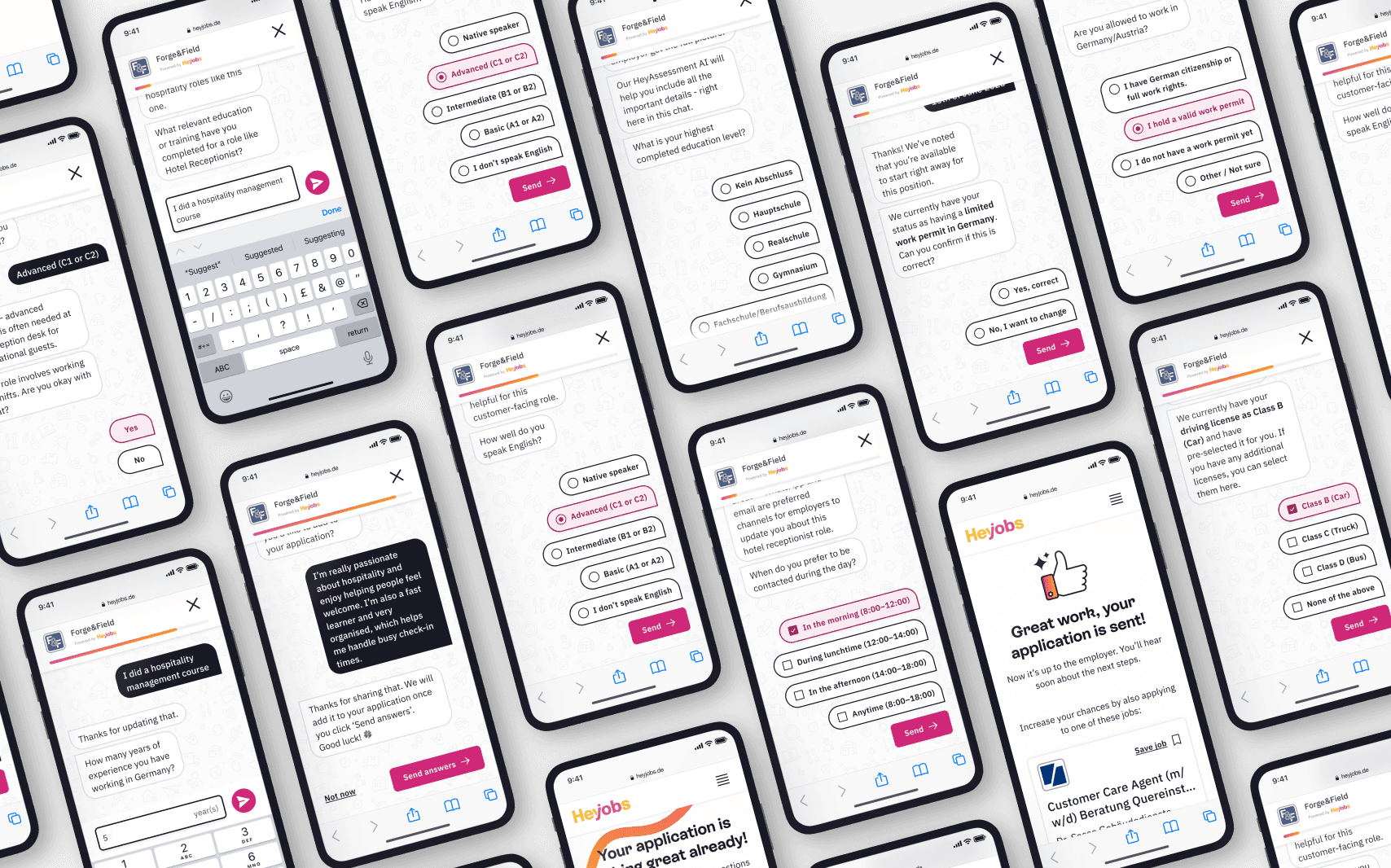

I iterated through multiple rounds, starting broad and getting progressively more specific. The first rounds focused on the overall chat flow: how questions appear, response formats, loading states (especially a natural typing feel), layout options, progress indicators, and general look and feel. Later rounds zoomed in on handling the different question types: yes/no, single-select, multi-select, number input, date input, and free text.

The final rounds refined the finishing touches: background patterns and animations. Throughout, I worked independently while pairing closely with the front-end team.

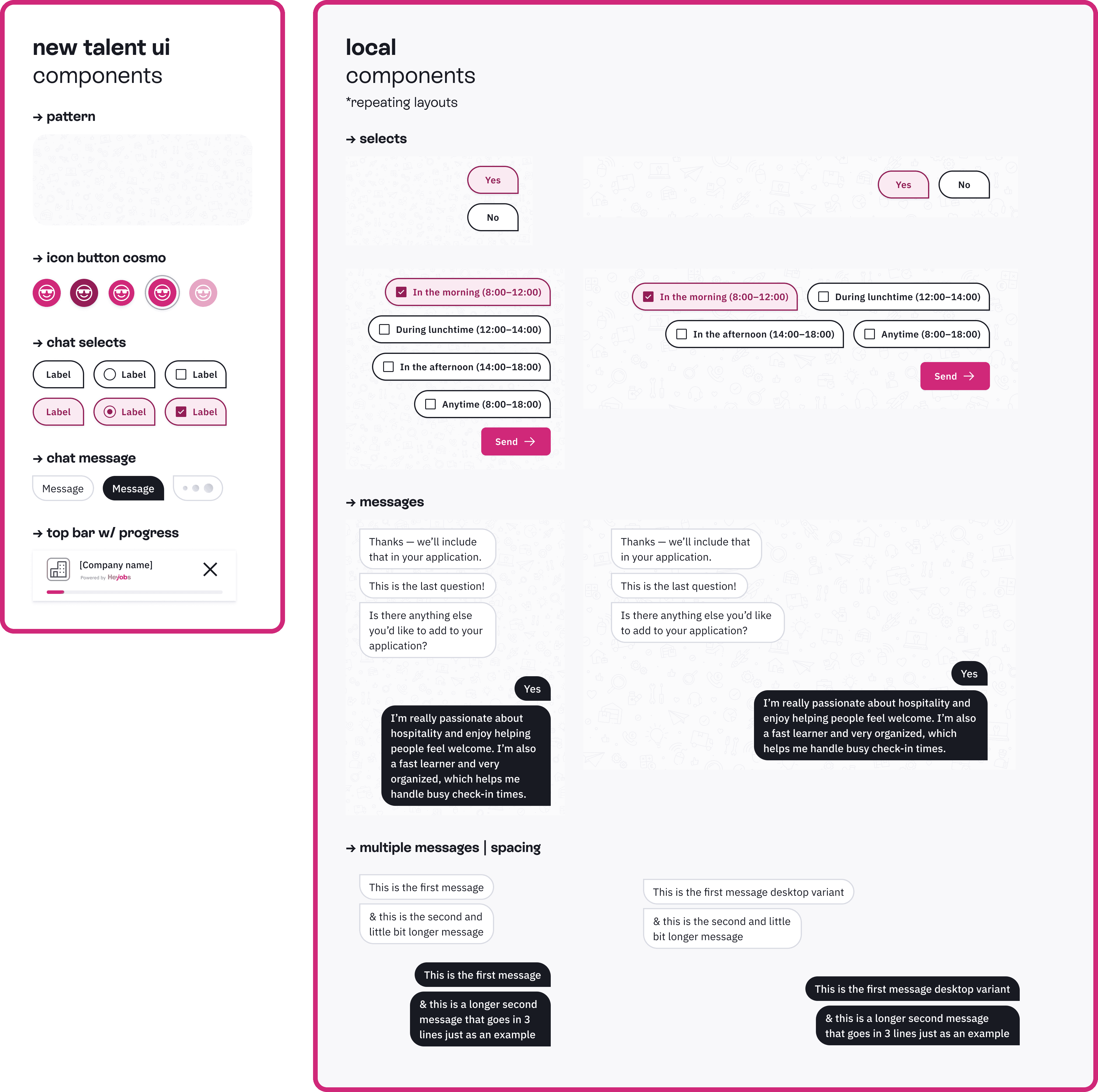

System components & Interactions

I paired closely with my engineering counterpart to work out how best to build the system for an intuitive, smooth experience. Rather than starting from scratch, we duplicated our existing select component to create a 'chat select' variant with different styling and slightly adjusted behaviour, prioritising consistency and maintainability. We added a new variant of the existing top bar with a progress bar, and built new message components with black, white, and loading variants to differentiate between questions and answers. A lot of fine-tuning went into getting the animations right, including a natural typing feel, ensuring text wrapped properly, and making the different layouts and patterns work together as a cohesive whole.

Constraints & Trade-offs

SOLUTION

The Experience

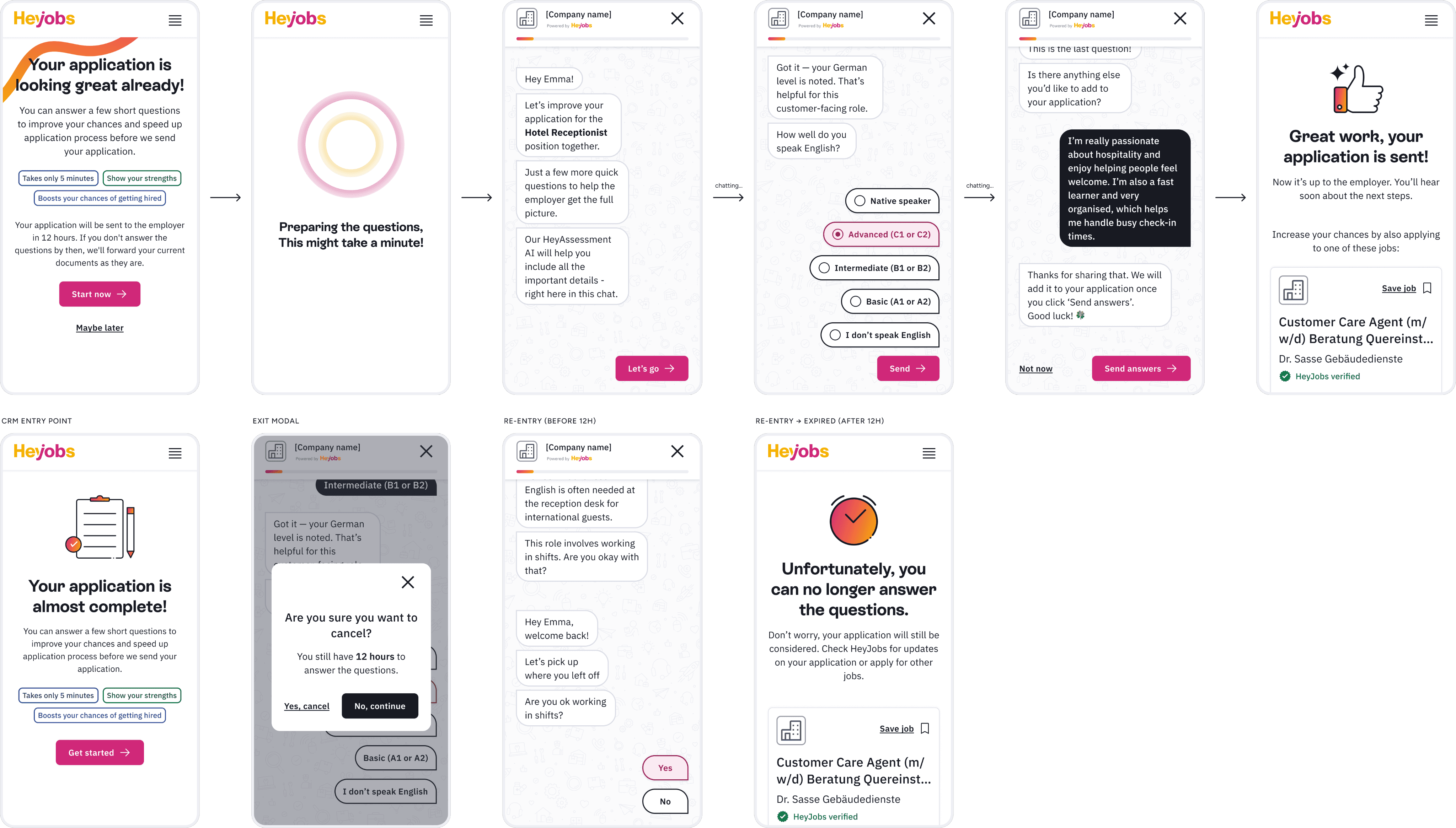

The final design guides candidates through a complete journey. It starts with a landing page that sets expectations: it takes less than 5 minutes, highlights your skills, and boosts your chances. A short loading screen then signals that the experience is tailored to the job.

The chat opens with a welcome message that explains why the assessment is part of the application and reassures candidates that it is quick and relevant to the job. The conversation then leads them through a small set of focused, role-related questions. Interactions stay simple, mobile-friendly, and predictable, so candidates always know what to do next.

After the last question, candidates receive a clear confirmation that everything is submitted. The final screen explains what happens next, who will see their responses, and how this step strengthens their application. The overall flow aims to feel straightforward, fair, and supportive, turning a typical test into a smooth way to show real ability.

IMPACT

Key Metrics & Results

Impact was measured through cohort analysis, comparing applications with completed assessments against a baseline cohort of applications that never had an assessment.

The data revealed a more telling signal. Candidates who started but abandoned the assessment showed a lower quality rate than the baseline cohort that never had one at all. This reframed the assessment entirely: it wasn't a data-collection tool; it was an intent filter. Completion itself was the signal.